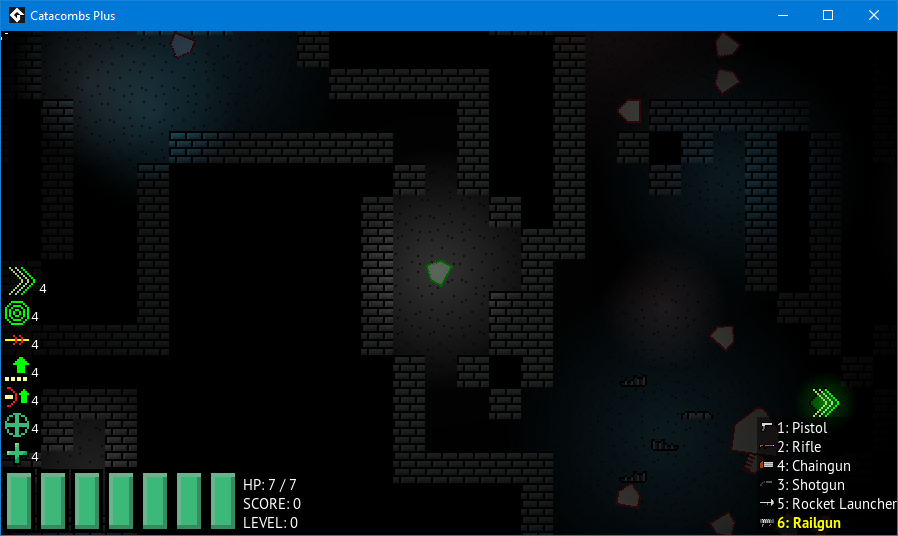

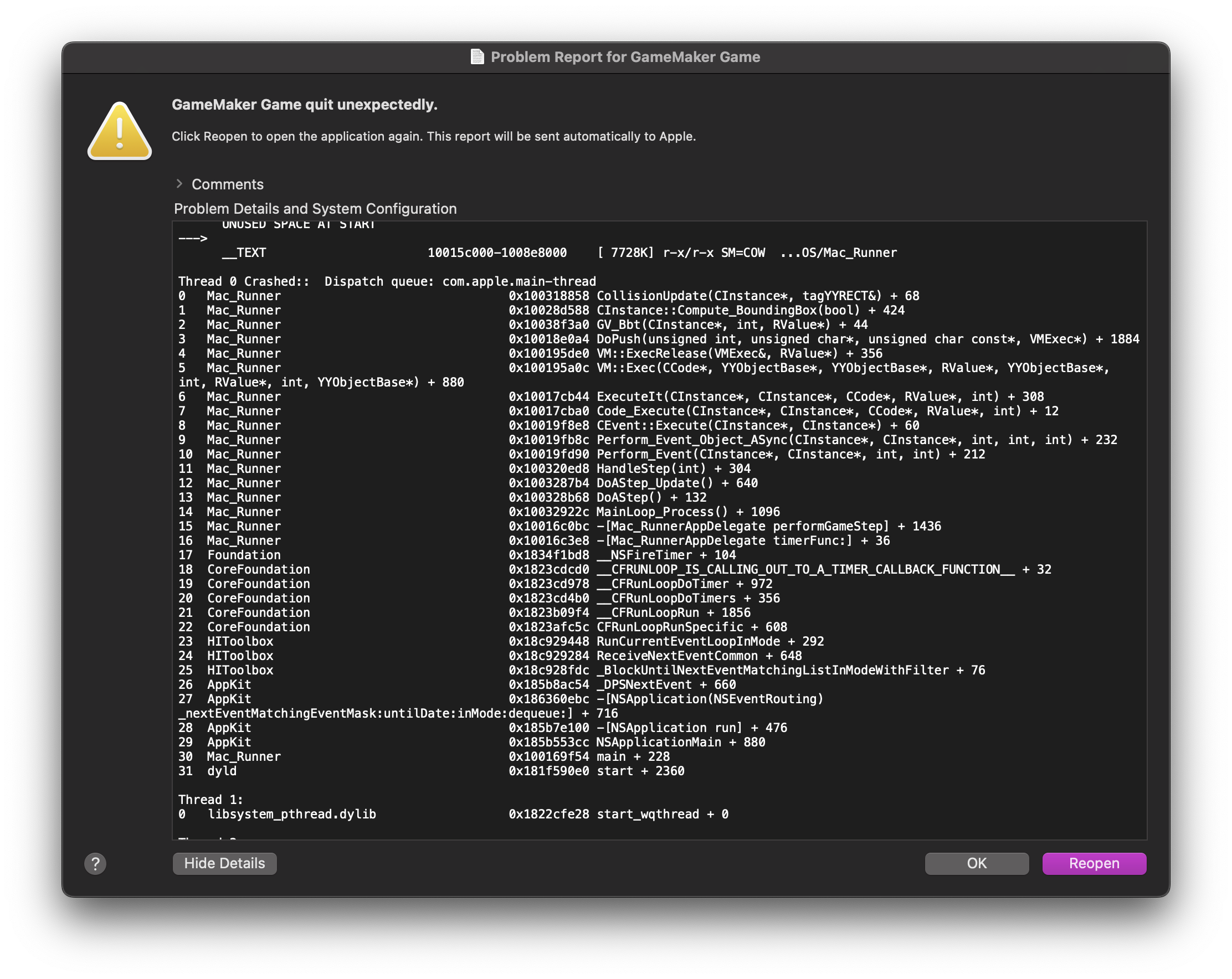

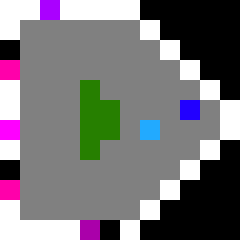

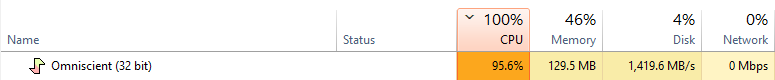

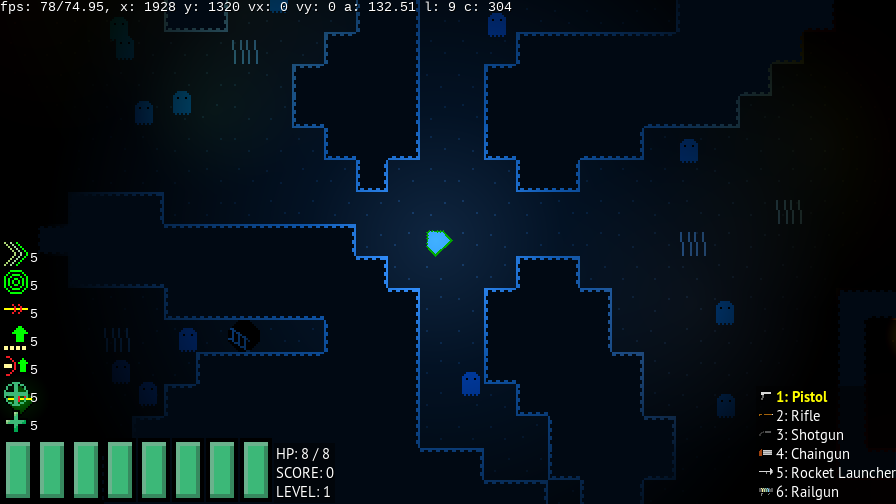

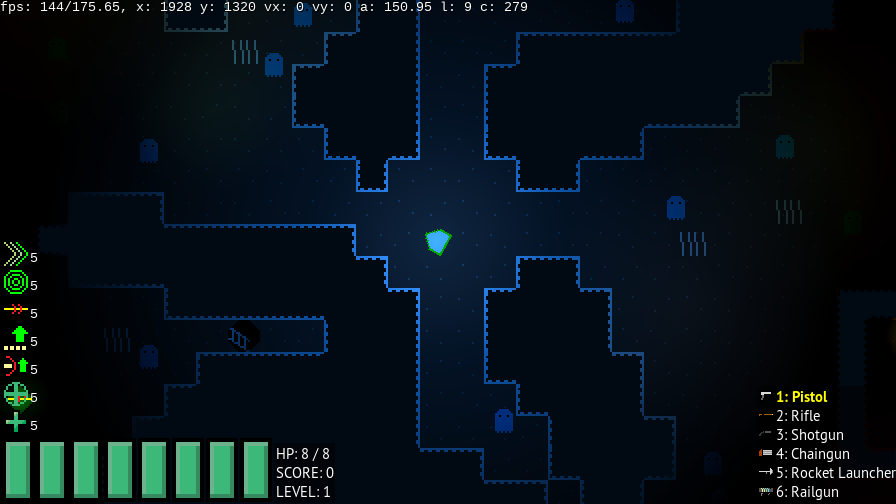

Here’s a screenshot of one of the laggier maps in the game - mostly due to the large number of enemies it spawns:

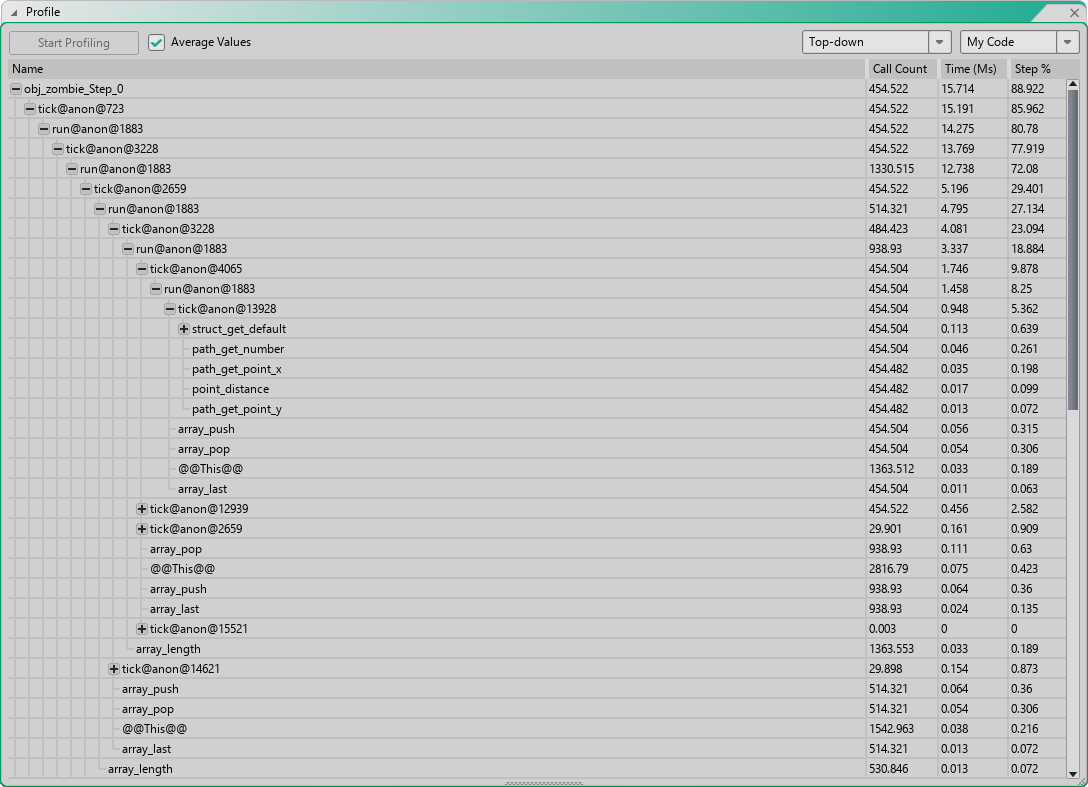

75 FPS average, in a VM build. YYC will compile to native code and be faster, of course. Playable, but I have a 144 Hz monitor! Let’s throw it in the profiler:

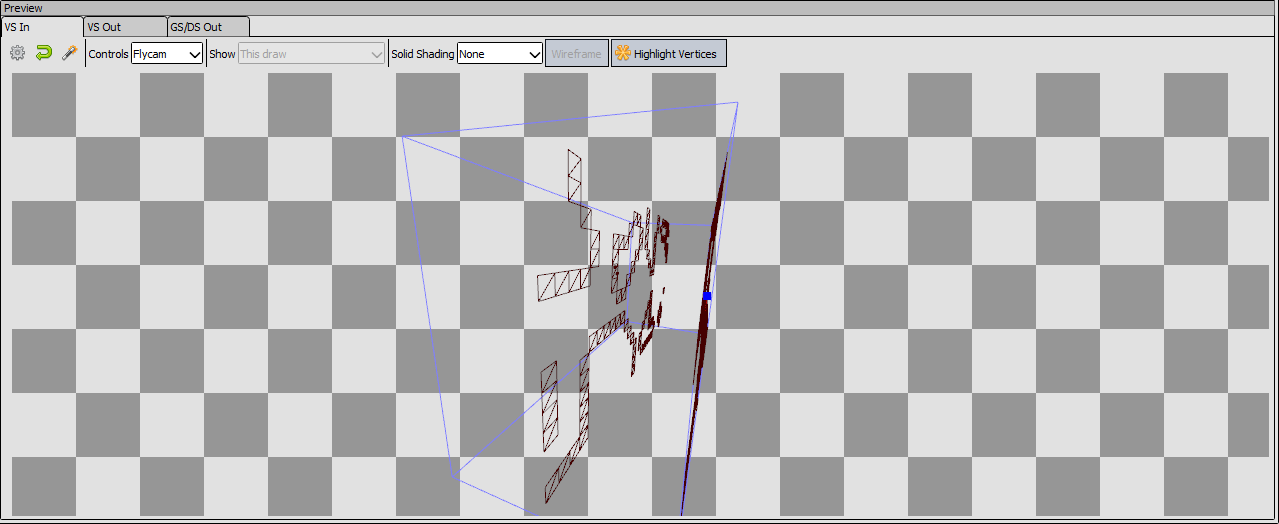

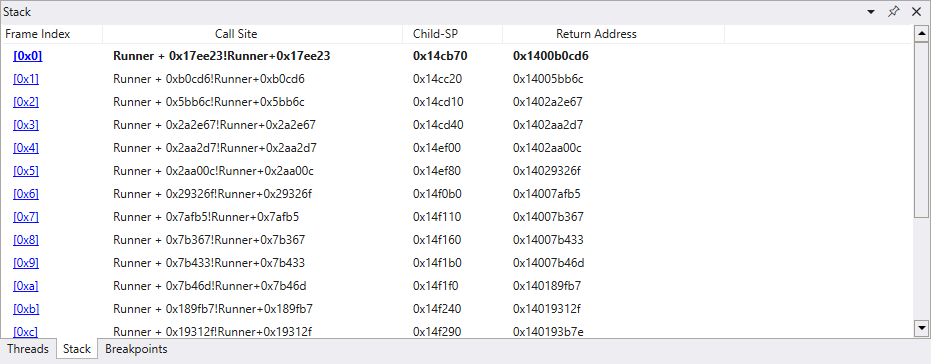

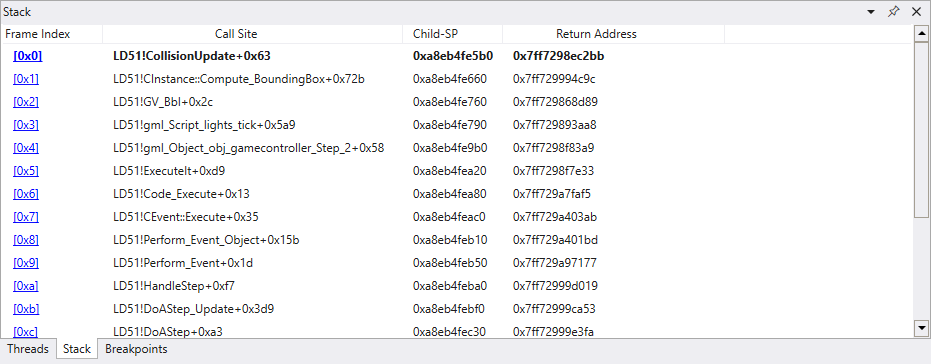

Well, that’s not very helpful - all of the busy code is occurring in structs, but GameMaker’s profiler isn’t preserving the struct names, rendering all that information largely useless. But - we still have the function names, and from that I can still figure something out: it’s all in my AI code! I use a behaviour node system, and each node is a struct with functions like so:

    function BehaviourNode() constructor {
        /// @description The actual implementation of a node. Implement this when creating a node, but use `run` to actually execute.
        /// Returns a BehaviourState.
        /// @param {Struct} info
        /// @return {Real}
        static tick = function(info) { }

        /// @description Executes this node, correctly updating the state of the AI system.
        /// This should be used instead of tick, as it allows nodes to correctly use the running state.
        /// Returns a BehaviourState.
        /// @param {Struct} info
        /// @return {Real}
        static run = function(info) {
            if (array_last(info.system.executionStack) != self) {
                array_push(info.system.executionStack, self);
            }

            var r = tick(info);
            if (r != BehaviourState.running) {
                array_pop(info.system.executionStack);
            }

            return r;
        }
    }

The mess of tick and run shows that the most performance-intensive functions are in the behaviour nodes. Unfortunately, I can’t figure out which, since the struct names are missing… but that’s fine.

I don’t mind having enemies with heavy AI, and I can still get performance gains elsewhere.
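If per-node numbers were ever needed, one workaround would be to time nodes by hand. This is only a minimal sketch: `behaviour_run_timed` and `global.nodeTimings` are hypothetical names that are not in the actual code. It leans on `instanceof`, which returns the constructor name of a struct, which is exactly the information the profiler drops.

```gml
// Hypothetical: create once at game start, e.g. in a controller's Create event.
global.nodeTimings = {};

/// Hypothetical wrapper: runs a node and adds the elapsed time to a
/// running total keyed by the node's constructor name.
/// @param {Struct} node
/// @param {Struct} info
/// @return {Real}
function behaviour_run_timed(node, info) {
    var start = get_timer();            // microseconds since the game started
    var result = node.run(info);
    var elapsed = get_timer() - start;

    var name = instanceof(node);        // constructor name of the node struct
    var total = variable_struct_exists(global.nodeTimings, name)
        ? variable_struct_get(global.nodeTimings, name)
        : 0;
    variable_struct_set(global.nodeTimings, name, total + elapsed);

    return result;
}
```

Calling `behaviour_run_timed(child, info)` in place of `child.run(info)` would then build up totals that can be dumped with `show_debug_message(global.nodeTimings)`. Note that a parent node's total includes the time spent in any children it runs.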

First, I added a simple option to each AI system that determines the maximum distance from the player at which the system will stop ticking:

    var maxDistance = struct_get_default(options, "maxDistance", 16 * 32);
    if (maxDistance > 0 && point_distance(me.x, me.y, obj_player.x, obj_player.y) > maxDistance) {
        return;
    }

By default, enemies that are further than 32 tiles away from the player will no longer update. This has the side effect of enemies not chasing the player if you run far enough away from them, but otherwise the existing enemies don’t really do anything when you’re far from them, so this is an easy performance win. This can be disabled, and I could easily make it so the AI can control whether this check is enabled based on what it’s doing if I want to.
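`struct_get_default` in the snippet above is presumably a project helper rather than a built-in. As a rough sketch under that assumption, such a helper could look like the following; the body is a guess at the behaviour, not the actual implementation.

```gml
/// Assumed behaviour: return the value stored under `key` if it exists,
/// otherwise return `fallback`.
/// @param {Struct} source
/// @param {String} key
/// @param {Any} fallback
function struct_get_default(source, key, fallback) {
    if (is_struct(source) && variable_struct_exists(source, key)) {
        return variable_struct_get(source, key);
    }
    return fallback;
}

// Usage: an AI system that opts out of the distance check by passing 0.
var options = { maxDistance: 0 };
var maxDistance = struct_get_default(options, "maxDistance", 16 * 32);
// maxDistance is 0 here, so the `maxDistance > 0` guard skips the check.
```

Treating zero or a negative value as "disabled" keeps the option to a single number instead of a number plus a separate flag.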

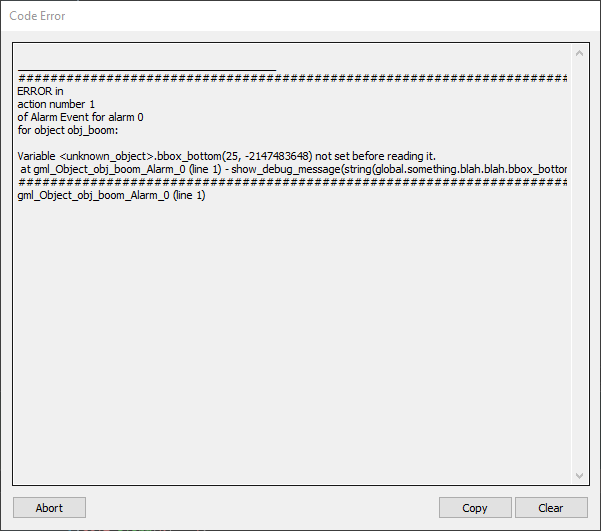

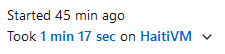

Let’s see what it did:

Up to 175 FPS. Nice.

There’s another small optimisation I can make: spawn fewer enemies. Not too long ago I replaced the spawning logic from Catacombs 51, which just spawned enemies randomly around the map at generation time, with one that spawns enemies dynamically throughout gameplay. Enemies are spawned around an invisible spawner object when:

- there are no enemies within the spawn radius of the spawner,

- the spawn radius is not within the camera view,

- a random amount of time has passed since the last spawn.

I added another condition, which checks that the spawner is no more than 16 tiles away from the camera:

    var distance = point_distance(obj_player.x, obj_player.y, self.x, self.y);
    if (distance > 16 * (max(camW, camH) + 16)) {
        return;
    }
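Put together, the spawn conditions might be sketched roughly as below. This is not the actual spawner code: `spawner_can_spawn`, `obj_enemy`, `spawnRadius` and `nextSpawnTime` are hypothetical names, and the use of `view_camera[0]` is an assumption. `camW` and `camH` are used exactly as in the snippet above.

```gml
/// Hypothetical helper, called from the spawner object each step.
/// @return {Bool}
function spawner_can_spawn() {
    // No enemies already inside the spawn radius.
    if (collision_circle(x, y, spawnRadius, obj_enemy, false, true) != noone) {
        return false;
    }

    // The spawn radius must not overlap the camera view
    // (rectangle_in_circle returns 0 when there is no overlap).
    var cam = view_camera[0];
    var vx = camera_get_view_x(cam);
    var vy = camera_get_view_y(cam);
    var vw = camera_get_view_width(cam);
    var vh = camera_get_view_height(cam);
    if (rectangle_in_circle(vx, vy, vx + vw, vy + vh, x, y, spawnRadius) != 0) {
        return false;
    }

    // A randomly chosen delay since the last spawn must have passed.
    if (current_time < nextSpawnTime) {
        return false;
    }

    // New condition: ignore spawners that are too far from the player.
    var distance = point_distance(obj_player.x, obj_player.y, x, y);
    return distance <= 16 * (max(camW, camH) + 16);
}
```

After a successful spawn, the spawner would pick its next delay with something like `nextSpawnTime = current_time + irandom_range(2000, 8000);`, where the range is purely illustrative.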

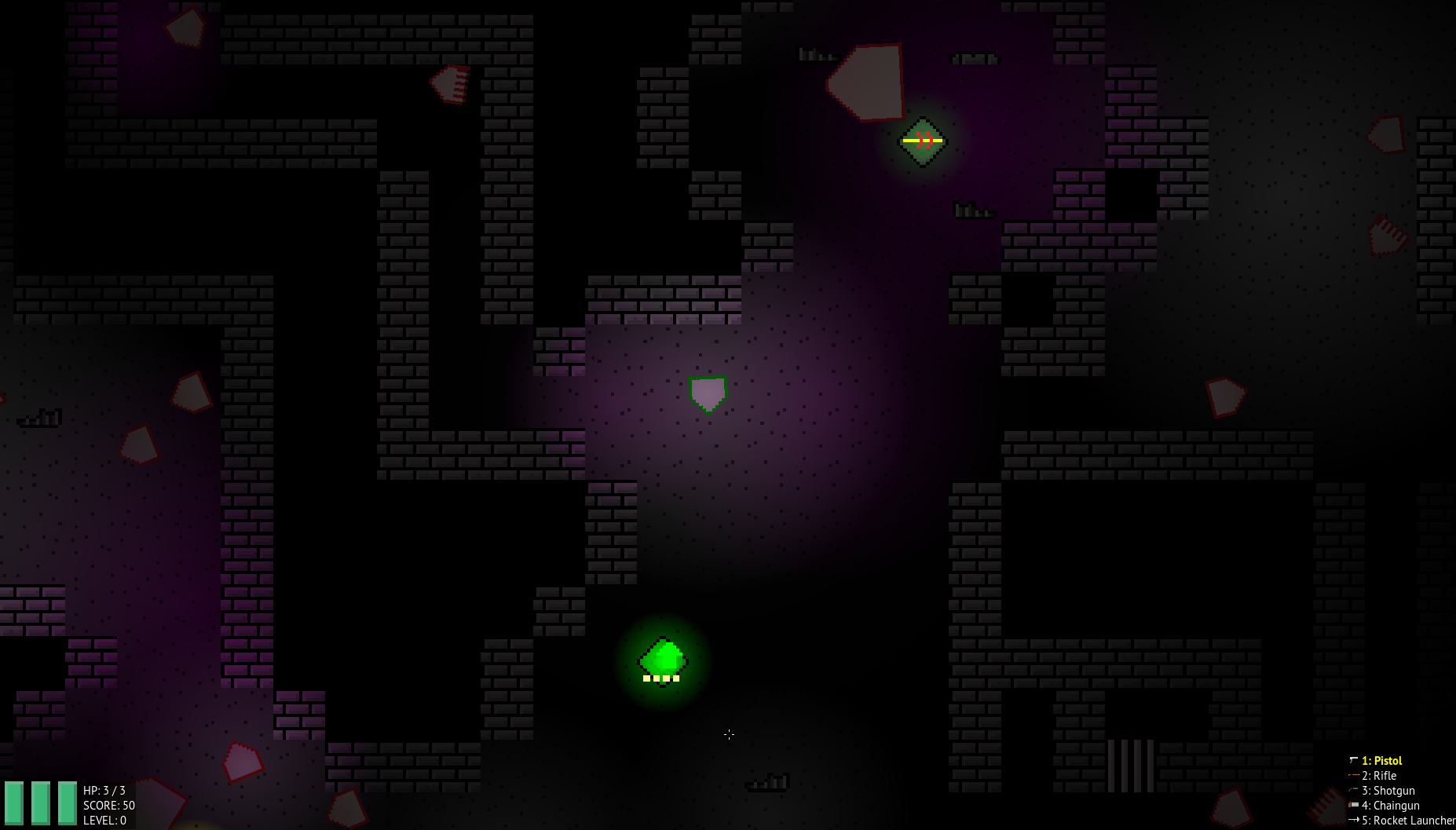

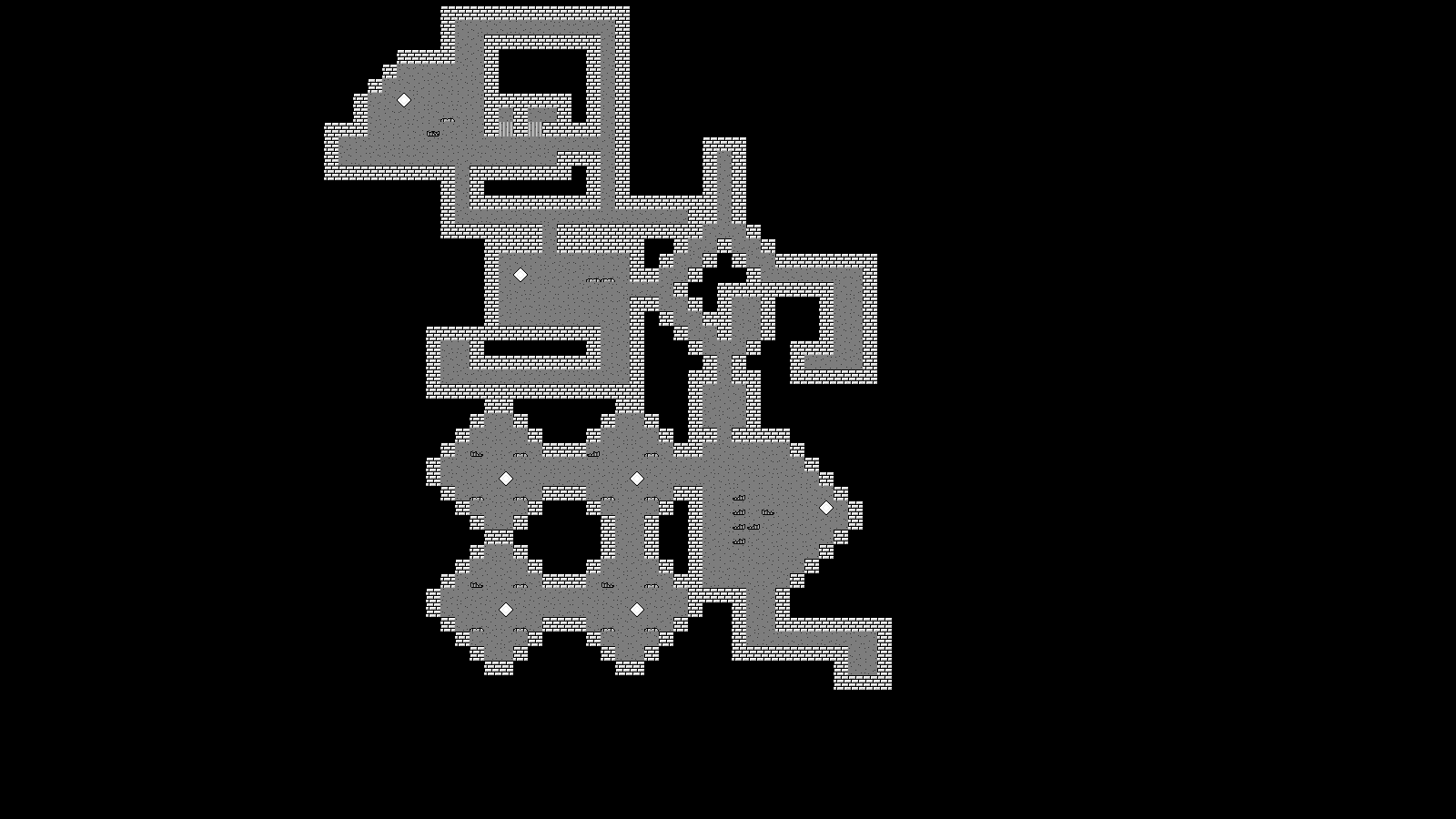

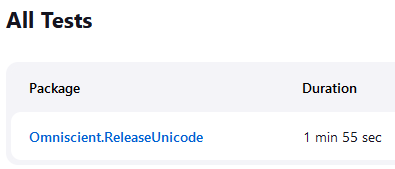

The performance improvements are slight but noticeable:

Up to around 190-200 FPS now, a definite improvement from where we started. As you progress through the later levels this gets even more noticeable, as higher levels spawn more enemies. This would slow down the game to comically low framerates before, but now higher levels can easily stay within hundreds of FPS.

As mentioned above, 75 to 190 FPS is about 2.5× faster. Pretty good I’d say.

Development on Catacombs Plus is slow-going at the minute, because I’m lazy, not entirely well, and distracted with other things, but things are still happening.
